Stage 3 – Using the data

PREMs are recommended as a resource to prioritise and inform local safety and quality improvement, to stimulate meaningful discussion with consumers, and to help organisations to keep track of their move towards patient-centred care.

Stage 3 suggests methods to help in translating PREM responses collected from your patients into quality and safety improvements that patients can see and feel. Although this stage is about how you use the information you gain from patients, the steps should be thought through well in advance of getting the PREM to patients.

The decisions you have made in Stages 1 and 2 will shape your decisions and actions in Stage 3. Most importantly, the way you analyse and interpret the raw data, and the way your organisation reports and acts on the findings of that analysis, must be consistent with the objectives you set up in Stage 1.

Stage 3 Outcomes

By working through the steps in this stage, you will be able to:

- Decide whether and how to set automated triggers for action based on PREM responses

- Decide on a data analysis strategy

- Think through how to present results, to whom and how often

- Think through how to translate PREM results into improvement in safety and quality of care

Outcome

By completing step 3.1 you will have a set of triggers for action that will help you ensure that the PREM implementation and operation achieve the objectives you have defined. You will also have decided how to communicate these across your organisation.

Things to consider

This section lists the items that need to be considered in Step 3.1 to identify a set of triggers for action.

Parameters

Consider the parameters that you need to monitor in your organisation to determine whether you are on track to achieve your organisation’s PREM objectives. Parameters may include:

- Pre-administration attrition rate

  Attrition can lead to sampling bias. The attrition rate is the proportion of eligible patients that cannot be administered the survey because:

  - they do not consent to being sent a survey

  - the organisation does not have the required information about the patient to administer the survey (for example, mobile phone number if the survey is administered by text message, or email address if administered by email).

- Response rate and completion rate

  This is the proportion of eligible patients receiving the survey who respond partially or completely to the survey and return their responses to the surveying organisation. Partial responses lead to response bias in results; they may be received when:

  - the survey is administered using pen and paper

  - the survey administration method does not make all questions compulsory.

- Attrition and response rates for population segments

  You may want to make sure that certain groups within your patient population are adequately represented in the responses you receive or that the relative representation of different groups is reflective of your overall patient population. If so, you could monitor attrition rates and response rates broken down by:

  - cultural and linguistic background

  - Aboriginal and/or Torres Strait Islander status

  - age and gender

  - department or specialty of admission

  - reason for attending.

- Number of respondents

  This is the absolute number of people who send a completed response within a defined time frame, as well as numbers of respondents within each population group you would like to present disaggregated data about. Low numbers of responses may affect the analysis process and the ability to report PREM results.

- Average performance on individual questions

  Consider whether you would like to set and communicate an expectation about how your organisation (or different parts of it) performs on each question. You could do this by:

  - setting a goal for the average of all responses returned within a defined time frame, across all questions (this will require setting up a scoring system beforehand – see Step 3.2)

  - setting a goal for the proportion of respondents selecting a particular response(s) (for example, X% select ‘always’; X+15% select ‘always’ or ‘mostly’).

- Average performance on overall (final) question

  Consider whether you would like to set and communicate an expectation about how your organisation (or different parts of it) performs on the overall (final) question. You could do this by setting a goal for the proportion of respondents selecting a particular response(s) (for example, X% select ‘very good’; X+10% select ‘very good’ or ‘good’).
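The attrition and response rate parameters above are simple proportions, so they can be monitored per population segment with very little code. The following is a minimal sketch in Python, not a prescribed method. The class `SegmentCounts`, its field names, the segment labels and all counts are hypothetical, and the sketch assumes that the two reasons for attrition are counted without overlap.

```python
from dataclasses import dataclass


@dataclass
class SegmentCounts:
    """Hypothetical counts for one population segment in one reporting period."""
    eligible: int         # patients eligible for the survey
    not_consented: int    # eligible patients who did not consent to be surveyed
    missing_contact: int  # eligible patients without the contact details needed
    responded: int        # patients who returned a partial or complete response


def attrition_rate(c: SegmentCounts) -> float:
    """Proportion of eligible patients who could not be administered the survey."""
    if c.eligible == 0:
        return 0.0
    # Assumes the two attrition reasons are counted without overlap.
    return (c.not_consented + c.missing_contact) / c.eligible


def response_rate(c: SegmentCounts) -> float:
    """Proportion of patients who received the survey and responded to it."""
    surveyed = c.eligible - c.not_consented - c.missing_contact
    if surveyed <= 0:
        return 0.0
    return c.responded / surveyed


# Illustrative numbers only.
segments = {
    "under_65": SegmentCounts(eligible=200, not_consented=20, missing_contact=20, responded=80),
    "65_and_over": SegmentCounts(eligible=100, not_consented=10, missing_contact=40, responded=10),
}

for name, counts in segments.items():
    print(name, round(attrition_rate(counts), 2), round(response_rate(counts), 2))
# prints:
# under_65 0.2 0.5
# 65_and_over 0.5 0.2
```

In this made-up example the older segment has both higher attrition and a lower response rate, which is the kind of pattern that segment-level monitoring is meant to surface.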

Baseline

If you plan to conduct a staged implementation involving a pilot study, you can use the pilot to determine the baseline measure for each of the above parameters. This helps you to decide what is achievable in your organisation in the short, medium and long term.

Note that after analysis of an initial pilot, there is an opportunity to make changes to your implementation to improve scores on each parameter. If, after a trial of the new method, scores have improved and you decide to take the new method into your full rollout, the baseline scores will now be the results from the trial of the new method.

Active consideration of PREM results and response ‘red flags’

Step 3.4 will look at ways in which PREM results can be integrated into workflows to ensure they are actively considered by relevant staff members. You may wish to monitor how often this happens when there are certain types of responses or trends in responses.

Consider whether you will establish red flag triggers for any instance of a particular response. For example, if using AHPEQS, you may set up an alert for any positive responses to the question ‘I experienced unexpected harm or distress’, or an alert only when this is accompanied by a subsequent response that staff did not discuss it with the patient.
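As an illustration of the two red flag rules described above, here is a minimal Python sketch. The question keys (`experienced_unexpected_harm`, `staff_discussed_harm`) and the `"yes"`/`"no"` answer codes are assumptions made for this example; your survey export will have its own field names and response options.

```python
# Hypothetical question keys; a real survey export will use its own names.
HARM_KEY = "experienced_unexpected_harm"
DISCUSSED_KEY = "staff_discussed_harm"


def red_flag(response: dict, require_no_discussion: bool = False) -> bool:
    """Return True if this single response should raise a red flag alert.

    With require_no_discussion=False, any report of unexpected harm or
    distress raises the flag. With require_no_discussion=True, the flag is
    raised only when the patient also reports that staff did not discuss it.
    """
    if response.get(HARM_KEY) != "yes":
        return False
    if not require_no_discussion:
        return True
    return response.get(DISCUSSED_KEY) == "no"


example = {HARM_KEY: "yes", DISCUSSED_KEY: "yes"}
print(red_flag(example))                              # True
print(red_flag(example, require_no_discussion=True))  # False
```

Which of the two rules to use is an organisational decision; the stricter rule produces fewer alerts but will not surface harm that was discussed with the patient.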

Triggers for action

You can set absolute and/or relative scores on each parameter to define when corrective action will need to be taken:

| Type of trigger | What to consider |
|---|---|
| Triggers based on parameter value | Consider the minimum acceptable value for each parameter (that is, the point at which you think corrective action will need to be taken). |
| Triggers based on changes in parameter value | Consider the minimum acceptable change in value for each parameter over a defined time frame, below which corrective action will need to be taken. This type of trigger will need to take into account ceiling effects (so as not to create a trigger when the values are above a certain level to start with). |
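The two kinds of trigger can be expressed as small checks. This is a sketch only, written in Python: the function names, the thresholds and the way the ceiling is applied (no change-based trigger when the starting value is already at or above `ceiling`) are assumptions made for illustration, and higher parameter values are assumed to be better.

```python
def value_trigger(value: float, minimum: float) -> bool:
    """Fire when a parameter falls below its minimum acceptable value."""
    return value < minimum


def change_trigger(previous: float, current: float,
                   min_change: float, ceiling: float) -> bool:
    """Fire when the change over the period is below the minimum acceptable change.

    No trigger is raised when the previous value was already at or above
    `ceiling`, because there is little room left for further improvement.
    """
    if previous >= ceiling:
        return False
    return (current - previous) < min_change


# Illustrative thresholds only.
print(value_trigger(0.25, minimum=0.30))                          # True
print(change_trigger(0.60, 0.61, min_change=0.05, ceiling=0.90))  # True
print(change_trigger(0.95, 0.94, min_change=0.05, ceiling=0.90))  # False
```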

Automating the triggers

Automating triggers will make the process more useful and cost-effective for your organisation. Think about:

- How you can build automated alerts into your surveying system when trigger thresholds are reached

- How the trigger alert will be delivered and when

- Who the alert will be delivered to and who is expected to take action

- How the appropriate action is coupled with delivery of the alert

- Whether there is a need for different ‘severities’ of alert – such as a traffic light system where ‘red’ indicates immediate action required and ‘amber’ indicates action required within a particular time frame.
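One way to think about alert severities is as a mapping from a parameter value to a level, plus a routing table that says who receives each level. The Python sketch below assumes that higher values are better; the thresholds, the `GREEN` level and the recipients are hypothetical and would need to be agreed within your organisation.

```python
from enum import Enum


class Severity(Enum):
    GREEN = "no action required"  # assumed level, not named in the guidance
    AMBER = "action required within a set time frame"
    RED = "immediate action required"


def classify(value: float, amber_below: float, red_below: float) -> Severity:
    """Map a parameter value (higher is better) to an alert severity."""
    if red_below > amber_below:
        raise ValueError("red_below must not exceed amber_below")
    if value < red_below:
        return Severity.RED
    if value < amber_below:
        return Severity.AMBER
    return Severity.GREEN


# Hypothetical routing table: who receives each severity of alert.
RECIPIENTS = {
    Severity.RED: "unit manager, notified immediately",
    Severity.AMBER: "quality team, included in the weekly summary",
}

level = classify(0.25, amber_below=0.30, red_below=0.20)
print(level.name, "->", RECIPIENTS.get(level, "no alert sent"))
# prints: AMBER -> quality team, included in the weekly summary
```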

Defining what action will be taken for each trigger

Consider how your organisation will respond if a trigger for action occurs. An example is given below, which could be worked through for each of the triggers you have identified.

| If this trigger occurs | … this action will follow (examples only) |
|---|---|
| High pre-administration attrition rates | (1) Identify the problem(s). (2) Investigate the cause(s) of the problem. (3) Modify the survey process based on your identification and investigation of the problem. (4) Monitor the impact of the modification in the next round of results. |

Communicating expectations

Consider who you will consult and communicate with about establishing trigger thresholds, and how you will do this. For example:

- Staff responsible for sending out the survey and gathering responses will need to be consulted about the trigger thresholds and associated actions for attrition and response rates

- Staff responsible for analysing and reporting on completed responses will need to be consulted about trigger thresholds for absolute numbers of responses and proportion of completed responses by population segment

- Frontline professionals will need to be consulted about the trigger thresholds for performance on particular questions and the survey as a whole

- Senior managers will need to be consulted about appropriate actions to take when trigger thresholds are reached.

Reviewing parameters and triggers

Think about how often you will review each trigger threshold and the types of parameters monitored to check that they remain appropriate, and how you will modify them where needed.

Outcome

By completing step 3.2 you will have an analysis strategy for the raw PREM data you will receive from patients.

Things to consider

This section lists the items that need to be considered in Step 3.2 to prepare an analysis strategy for the raw PREM data.

Processing and ‘cleaning’ raw data

Depending on your mode(s) of administration, different methods for processing the raw data received from patients will be required. The goal of this processing is to deal with any ambiguities in responses and get the data into a format that can then be read by analysis software.

For example, if automated scanning technology is not being used, paper surveys may require manual data entry into a database and a rule will need to be developed for interpreting unreadable, ambiguous or incomplete responses and reflecting these in the database. A rule would also need to be developed for dealing with anything written by the respondent that does not fit with the response options given (for example, a request to be contacted if that possibility has not been offered).

The cleaning process can also involve de-identification of data – such as replacing a person’s name with a unique identifier or separating their identifying details from their survey responses.
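The de-identification step can be sketched as splitting each record into two parts linked only by a random identifier. This Python example is illustrative: the function `deidentify`, the field names (`name`, `phone`, `q1`) and the key `respondent_id` are hypothetical, and a real implementation would also need to meet your organisation's privacy and data governance requirements.

```python
import uuid


def deidentify(records: list[dict],
               id_fields: tuple[str, ...] = ("name", "phone")) -> tuple[dict, list[dict]]:
    """Split records into an identity lookup and de-identified responses.

    The identity lookup should be stored separately from the responses,
    with access restricted to the people who genuinely need it.
    """
    identities = {}
    responses = []
    for record in records:
        token = uuid.uuid4().hex  # random identifier, not derived from the patient
        identities[token] = {f: record[f] for f in id_fields if f in record}
        answers = {k: v for k, v in record.items() if k not in id_fields}
        responses.append({"respondent_id": token, **answers})
    return identities, responses


raw = [{"name": "Example Patient", "phone": "0000 000 000", "q1": "always"}]
identities, responses = deidentify(raw)
print(sorted(responses[0].keys()))  # ['q1', 'respondent_id']
```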

Descriptive statistics

Once the data have been ‘cleaned’, descriptive statistics can be used to summarise and describe the basic characteristics of aggregated survey response data for a cohort of patients. This is the simplest analysis strategy and can be represented in tables, charts and line graphs. Examples of descriptive statistics for a set of PREM responses might be:

- Frequency of response option choice for each question

- Trends over time in frequency of response option choice for each question.
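Both descriptive statistics listed above are counts of how often each response option was chosen, either overall or per reporting period. A minimal Python sketch follows; the record layout (a list of dictionaries with a `month` field and one key per question) is an assumption made for this example, and missing answers are simply skipped.

```python
from collections import Counter, defaultdict


def option_counts(responses: list[dict], question: str) -> Counter:
    """Count how often each response option was chosen for one question."""
    return Counter(r[question] for r in responses if r.get(question) is not None)


def counts_by_period(responses: list[dict], question: str,
                     period_key: str = "month") -> dict:
    """Count response options for one question within each reporting period."""
    by_period = defaultdict(Counter)
    for r in responses:
        answer = r.get(question)
        if answer is not None:
            by_period[r[period_key]][answer] += 1
    return dict(by_period)


# Illustrative data only.
data = [
    {"month": "2024-01", "q1": "always"},
    {"month": "2024-01", "q1": "mostly"},
    {"month": "2024-02", "q1": "always"},
    {"month": "2024-02", "q1": None},  # unanswered question
]
print(option_counts(data, "q1")["always"])             # 2
print(counts_by_period(data, "q1")["2024-02"]["always"])  # 1
```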

Scoring methods

Along with simple frequency analysis, you may wish to ‘score’ responses. Scoring (coding) is a way to convert qualitative responses (such as multiple-choice survey responses) into numerical data. Applying a score means placing a numerical value on response options in a way that reflects the desirability of that response.

You do not have to apply a scoring method, but, if you do, it must be applied consistently across all responses received from patients, whatever the mode of administration. No matter what the type of response options (for example, yes/no, ‘always’ to ‘never’), the most desirable response for each question needs to be assigned the highest or lowest score in a consistent way.

Partial credit scoring

Many PREMs have most of the response options offered on a frequency scale (‘always’ to ‘never’). For most of these questions, ‘always’ is the most desirable response. Using a ‘partial credit’ system, the highest score would be applied to that response, and progressively lower scores to ‘mostly’, ‘sometimes’ etc.

Some advantages of applying partial credit scoring are that:

- Credit is given proportionately for each option, so that services which get all ‘mostly’ responses will ‘perform’ better in their overall scoring than those services which get all ‘rarely’ responses

- When the scores on each question are aggregated across a group of patients, an average score can be calculated

- If you are required to report a composite score for performance on the whole PREM, scoring can help with this process.

Some disadvantages of applying scoring and aggregation of scores are that:

- Assigning numbers to qualitative categories (such as ‘always’) can lead to misleading representation of data (for example, giving a score of 4 to ‘always’ may imply that this is twice as valuable a situation to the patient as ‘sometimes’ which gets a score of 2)

- A decision needs to be made about how to treat different types of response option (for example, yes/no vs frequency scale)

- A decision needs to be made about how to treat missing or ambiguous responses.
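To make the partial credit idea concrete, the Python sketch below assigns 4 to ‘always’ down to 0 for ‘never’ and averages the scores for one question. The five-point scale and the rule for missing or unrecognised answers (they are excluded from the average) are assumptions; as noted above, both are decisions your organisation needs to make and apply consistently.

```python
from typing import Optional

# Assumed scale: the most desirable response gets the highest score.
SCORES = {"always": 4, "mostly": 3, "sometimes": 2, "rarely": 1, "never": 0}


def mean_score(answers: list, scores: dict = SCORES) -> Optional[float]:
    """Average partial credit score for one question.

    Missing or unrecognised answers are excluded from the average, which is
    only one of several possible rules. Returns None if nothing can be scored.
    """
    valid = [scores[a] for a in answers if a in scores]
    if not valid:
        return None
    return sum(valid) / len(valid)


print(mean_score(["always", "mostly", None, "sometimes"]))  # 3.0
```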

‘Top box’ and ‘bottom box’ scoring

Where your healthcare service scores highly on most PREM questions, it can be useful to only count ‘top box’ responses to discriminate between excellent and good experiences. This can motivate improvements towards consistent excellence. Results can then be presented in terms of the proportion of all responses for that question which received a top box response (for example, ‘always’). Other responses are not broken down in reporting. Anecdotally, this approach can be more effective in catalysing quality improvement and behaviour change within an organisation than the partial credit system.

If you are using a ‘top box’ system, it can be useful to add analysis of ‘bottom box’ (that is, the least desirable) responses as well. This can help highlight problematic patterns in quality and safety that would require corrective action.
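Top box and bottom box results are proportions of the answered responses for a question. A minimal Python sketch, assuming ‘always’ is the most desirable option and ‘never’ the least desirable, and assuming unanswered questions are left out of the denominator:

```python
from typing import Optional


def box_proportions(answers: list, top: str = "always",
                    bottom: str = "never") -> Optional[dict]:
    """Proportion of answered responses in the top box and the bottom box."""
    valid = [a for a in answers if a is not None]
    if not valid:
        return None
    n = len(valid)
    return {
        "top_box": valid.count(top) / n,
        "bottom_box": valid.count(bottom) / n,
    }


print(box_proportions(["always", "always", "mostly", "never"]))
# prints: {'top_box': 0.5, 'bottom_box': 0.25}
```

For questions where the most desirable answer is not ‘always’ (for example, a question about experiencing harm), the `top` and `bottom` arguments would need to be set per question.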

Analysis infrastructure

Think about how you will automate the analysis. This will involve some kind of database to store the raw data, with software to enable simple manual data entry and/or to enable automatic feeding in of returned electronic survey responses.

Security of stored data

Think about:

- How you will store data

- How long you will store data

- Who will have access to the data, and your arrangements for preventing access by other people

- Whether all stored data will be de-identified

- How you will communicate your security arrangements to consumers, to reassure them about the survey processes.

Outcome

By completing step 3.3 you will have decided how to present and report PREM results, the purpose of the different types of reports, who you will present them to and how often.

Things to consider

This section lists the items that need to be considered in Step 3.3 to present and report PREM data.

Your objectives for using the selected PREM, determined in Stage 1, will shape why and how you will present results and who you will report them to.

Presentation of data

Factors affecting how you present PREM results include:

- Who your audience(s) will be and what each of those audiences thinks is relevant and important

- Whether you are mostly looking to use the data as a resource for continuous quality and safety improvement or to report performance (it may be both)

- What level of granularity you want to display (that is, do you want to compare, for example, men vs women, ward 1 vs ward 2, orthopaedics vs general surgery)

Research has shown that there are more and less effective ways to present this type of data to different audiences.

Putting the results in the context of other patient-reported information

Using PREMs is only the starting point for understanding what is working and what is not working for your patients. PREM results must be presented in the context of other information your organisation collects about patients’ perspectives on the safety and quality of their treatment and care. Non-survey information collected from patients may include:

- Social media mentions

- Reviews on patientopinion.org.au and other healthcare review websites

- Manager or executive impromptu conversations with patients

- Complaints and compliments

- Consumer presentations to staff meetings and in staff training

- Focus groups that investigate safety and quality issues in greater depth

- Staff records of concerns raised by patients and carers

- Patient-reported incident measures

- Patient-reported outcome measures.

Using supplementary sources of information and presenting them alongside the PREM results will increase your ability to:

- Identify reasons behind PREM results for an individual patient or across a patient cohort

- Confirm or disconfirm anomalies in the PREM data.

Putting the results in the context of other safety and quality information

PREM results should also be put into the context of other safety and quality information from your organisation.

The Measurement and Monitoring of Safety Framework from The Health Foundation in the United Kingdom is an example of how an organisation can get a holistic picture of the safety and quality of its health services.

Methods of reporting

Some example methods of presenting data, and the purposes and audiences for which these methods might be appropriate, are given in the table.

Some example methods of presenting data

| Method of reporting | Purpose of reporting | Main audience | Frequency of reporting |
|---|---|---|---|
| Live interactive dashboard | Integration of patient perspectives into day-to-day decision-making and improvement | Clinicians, managers | Continuous |
| Live interactive dashboard | Early identification of emerging safety/quality issues | Clinicians, managers | Continuous |
| Live interactive dashboard | Identification of timely corrective action | Clinicians, managers | Continuous |
| Static retrospective reports | Report organisational performance (actual and trending) to Board | Executive, Board | Periodic, according to performance reporting cycle |
| Static retrospective reports | Evidence for accreditation | Accrediting agencies | Periodic, according to performance reporting cycle |
| Static retrospective reports | Meeting contractual obligations | Executive, funding agencies | Periodic, according to contract requirements |
| Interactive retrospective reports on organisation’s website | Accountability and transparency to consumers and the public | Consumers, general public, media | Periodic |
| Issue- or population-specific reports | Monitor comparative experiences between population, condition or service groups | Clinicians, managers | Periodic, according to organisational quality improvement strategies |
| Issue- or population-specific reports | Monitor organisational quality and safety qualities | Clinicians, managers | Periodic, according to organisational quality improvement strategies |

Outcome

By completing step 3.4 you will be able to develop ideas for translating PREM results into actions that support quality and safety improvement in your services.

Things to consider

This section lists the items that need to be considered in Step 3.4 to translate PREM data into improvement.

Moving from survey data to practical improvement

The claim that doing patient experience surveys and collecting patient feedback will lead to improvements in services relies on several assumptions. One study identified three assumptions:

- Assumption 1 – There are valid ways of measuring the healthcare experiences of patients for use in feedback

- Assumption 2 – Feedback of information about patients’ experiences to service providers (directly or indirectly via public reporting) stimulates improvement efforts within individuals, teams and organisations

- Assumption 3 – Improvement efforts initiated by organisations, teams or individuals lead to improvement in future patients’ experiences of care.

This step is concerned with assumptions 2 and 3. What makes it more likely that the PREM can be used to achieve meaningful change in services? What are the conditions and actions that need to be put in place to get from a patient filling out the survey to improvements in quality and safety that are noticeable to a patient? Researchers have identified some of the barriers and enablers of the meaningful use of patient experience (and safety and quality) data.

Consumer factors influencing collection of meaningful data

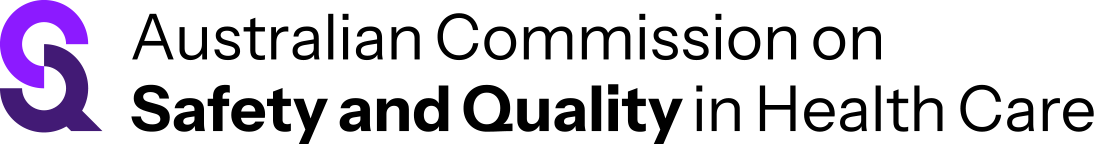

A study by De Brún et al. points out that there is no likelihood of getting meaningful patient experience data if patients do not see the point of providing it, do not feel empowered to provide it, or if it is difficult for them to fill out the survey. They developed a model of barriers and facilitators for consumers providing their feedback on the safety of the service they used.

Source: De Brún et al. (2017)

Factors affecting staff use of data for improvement

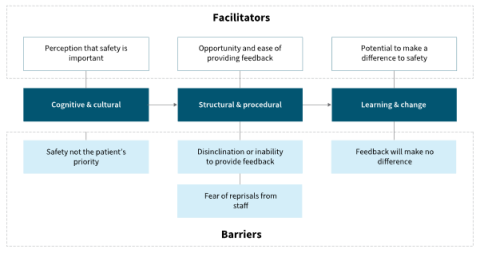

Research in the United Kingdom found that healthcare staff often find it difficult to make use of patient survey data. The research highlighted the complexity of the process needed to get from the point where staff receive patient feedback to the point where they respond to it by making changes to improve safety and quality. Required conditions are that:

- Staff must exhibit the belief that ‘listening to patients is a worthwhile exercise’

- Local teams need ‘adequate autonomy, ownership and resources to enact change’

- Where high level or inter-departmental support is required, there must be ‘organisational readiness to change’.

OR = organisational readiness; SL = structural legitimacy

Source: Sheaff et al. (2002)

Organisational and cultural factors influencing meaningful use of data

A literature review examined how large-scale patient experience survey results are used at a local level. It showed that translating these results into effective action and improvement depends on the following organisational and cultural factors:

- Sufficient resources in terms of knowledge, time and personnel to produce and present good quality data

- Positive attitude of staff towards patient experience information

- Effective and tailored presentation of results to staff – not just written feedback

- Common engagement and understanding from all professions in an organisation

- High-quality data collection and analysis methods, easily understood results, system to follow up results.

Davies and Cleary also identified the factors affecting the use of patient survey data in quality improvement. Although dated, this study presents a useful summary of barriers and enablers to the translation of survey data into practical change. These included:

- Organisational barriers

  - competing priorities

  - lack of supporting values for patient-centred care

  - lack of quality improvement infrastructure

- Organisational promoters

  - developing a culture of patient centredness

  - developing quality improvement structures and skills

  - persistence of quality improvement staff over many years

- Professional barriers

  - clinical scepticism

  - defensiveness and resistance to change

  - lack of staff selection, training and support

- Professional promoters

  - clinical leadership

  - selection of staff for their ‘people skills’

  - structured feedback of results to teams or individuals

- Data-related barriers

  - felt lack of expertise with survey methods

  - lack of timely feedback of results

  - lack of specificity and discrimination

  - uncertainty over effective interventions or rate of changes

  - lack of cost-effectiveness of data collection.

Integrated workflows

When setting up an electronic interface to present PREM results within the organisation (whether this is retrospective or real-time information), consider building in workflows to make it easier to act on the results. For example, when one of the trigger thresholds developed earlier in this stage is reached, particular actions could be prompted and monitored for completion. Another way to integrate quality improvement into the workflow is to provide suggestions for action in different parts of the organisation, even where trigger thresholds are not reached – and even if the results are mostly very good overall.

Analysis and reporting of results can be part of an automated electronic workflow consisting of:

- Identification of consenting patients in patient administration systems

- Extraction of eligible patient demographics from patient administration systems

- Distribution of the survey to eligible patients

- Entry of returned responses into database

- Quality checking and cleaning of data

- Scoring of data

- Descriptive statistics generation

- Application of any relevant statistical tests

- Presentation and reporting of results to different audiences (including live dashboards).

Feedback to consumers

It is good (though rare) practice to demonstrate to consumers who have taken the time to fill out a survey that their time has been well spent. Some options for doing this include:

- Presenting ‘real life’ evidence of previous or ongoing improvements stimulated or informed by patient experience survey results (either when sending the survey or after receiving a completed response)

- Asking consumers if they would be willing to share their experiences with frontline staff in a staff meeting or training

- Using consumer focus groups to further investigate a pattern in PREM data – to get to the reasons behind a problem and to help with designing solutions.

Engaging consumers as partners with frontline staff in reviewing PREM results in workshop environments to design improvements is a way to take the feedback and learning loop to a more meaningful level. Resources for experience-based co-design are available from the Point of Care Foundation.

Communities of practice

Setting up a regular forum for interested consumers, staff, managers and the executive to review PREM results together is a way to ensure that all stakeholders collaborate to achieve patient-focused safety and quality improvement. This could be a virtual or face-to-face community of practice inviting consumer and frontline staff input to identify, design, record and evaluate changes to processes, structures and practices. An example of a data-driven collaborative learning model, using a different type of healthcare data, can be seen in the work of Nelson et al.

Resources and references

- Aligning Forces for Quality. Patient experience of care: inventory of improvement resources. Washington, DC: Robert Wood Johnson Foundation; 2014.

- Baldie D, Guthrie B, Entwistle V, Kroll T. Exploring the impact and use of patients’ feedback about their care experiences in general practice settings—a realist synthesis. Family Practice 2017;35(1):13–21.

- Berwick D, James B, Coye M. Connections between quality measurement and improvement. Medical Care 2003;41(1):I30–8.

- Davies E, Cleary P. Hearing the patient's voice? Factors affecting the use of patient survey data in quality improvement. Quality and Safety in Health Care 2005;14(6):428–32.

- De Brún A, Heavey E, Waring J, Dawson P, Scott J. PReSaFe: a model of barriers and facilitators to patients providing feedback on experiences of safety. Health Expectations 2017;20(4):771–8.

- Gleeson H, Calderon A, Swani V, Deighton J, Wolpert M, Edbrooke-Childs J. Systematic review of approaches to using patient experience data for quality improvement in healthcare settings. BMJ Open 2016;6:e011907.

- Haugum M, Danielsen K, Hestad Iversen H, Bjertnaes O. The use of data from national and other large-scale user experience surveys in local quality work: a systematic review. International Journal for Quality in Health Care 2014;26(6):592–605.

- Health Experiences Research Group. Making better use of patient experience data for health service improvement (US-PEx): resource book for participating frontline medical ward teams. Oxford: Nuffield Department of Primary Care Health Sciences, University of Oxford; 2017.

- Nelson EC, Dixon-Woods M, Batalden PB, Homa K, Van Citters AD, Morgan TS. Patient-focused registries can improve health, care, and science. BMJ 2016;354:i3319.

- Sheaff R, Pickard S, Smith K. Public service responsiveness to users’ demands and needs: theory, practice and primary healthcare in England. Public Administration 2002;80(3):435–52.

- Staniszewska S, Bullock I. Can we help patients have a better experience? Implementing NICE guidance on patient experience. Evidence-Based Nursing 2012;15(4):99.

- Weich S, Fenton SJ, Bhui K, Staniszewska S, Madan J, Larkin M, et al. Realist Evaluation of the Use of Patient Experience Data to Improve the Quality of Inpatient Mental Health Care (EURIPIDES) in England: study protocol. BMJ Open 2018;8:e021013.